Low latency AI inference without compromises

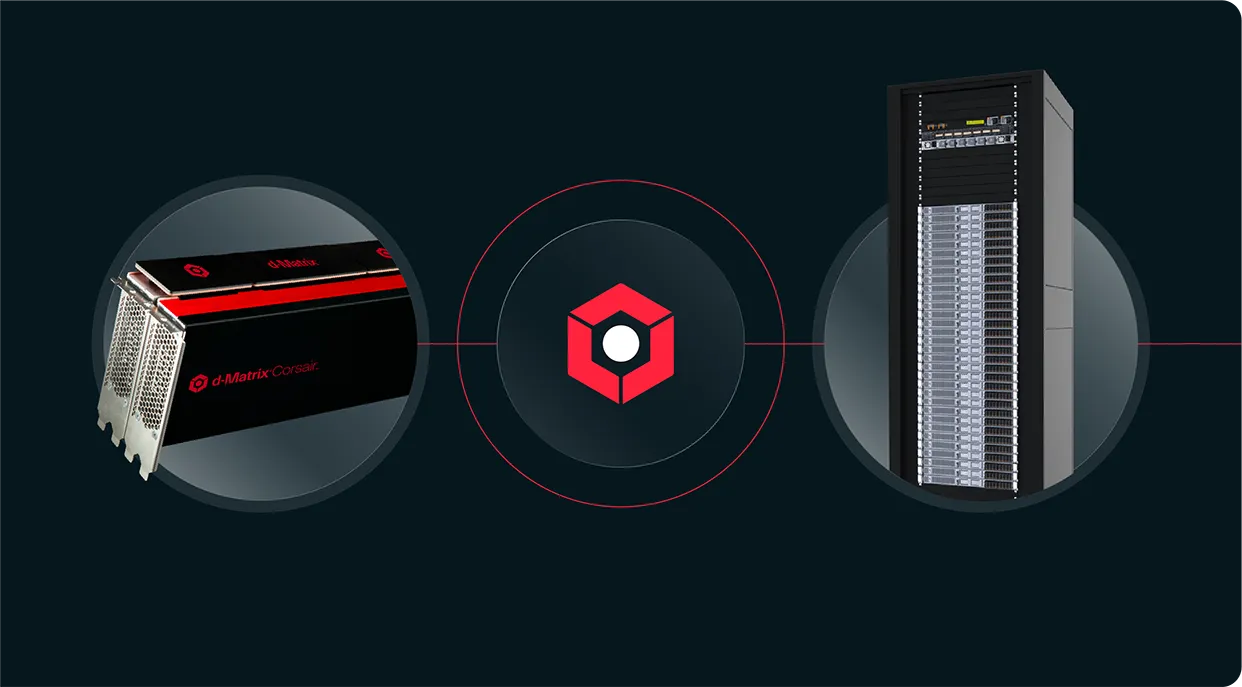

d-Matrix deploys revolutionary technology in memory-centric compute, next-generation I/O, and stacked DRAM solutions to power low latency AI inference at scale.

d-Matrix and Gimlet Labs to Deliver 10x Inference Speed Up and Power Efficiency

Corsair + GPU disaggregated pipelines deliver 10x the performance of a standard GPU-only pipeline.

A radically different approach to compute + memory

- Memory-centric approach prevents latency bottlenecks to deliver low-latency interactive applications.

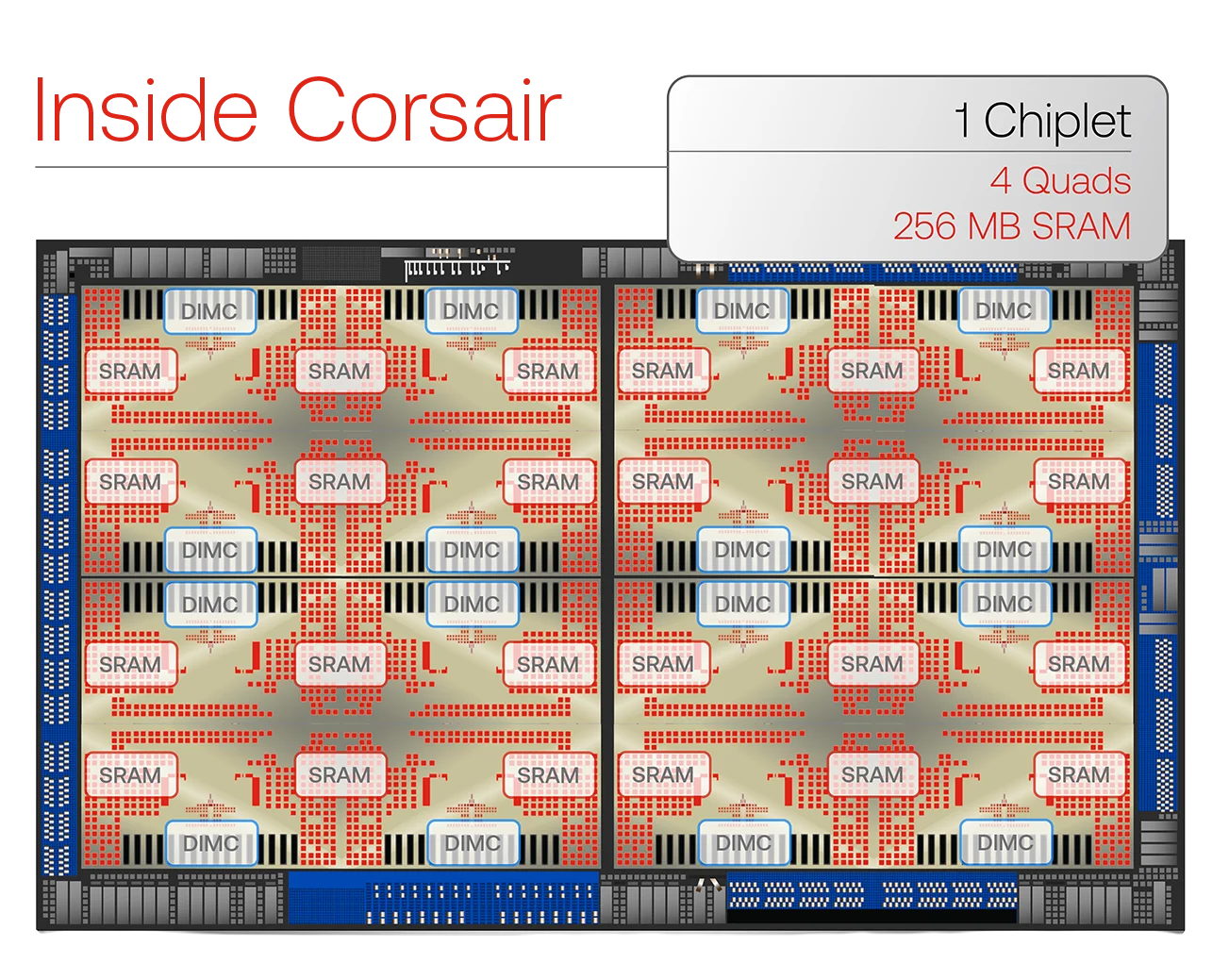

- Chiplet-based design enables scaling SRAM-based architecture to power models up to 100B parameters.

- PCIe form factor delivers instant results with existing data center configurations.

Rethinking AI Infrastructure with 3DIMC™

Memory is the real bottleneck in modern AI systems. d-Matrix’s 3D stacked digital in-memory compute (3DIMC™) architecture unlocks faster, more efficient inference at scale.

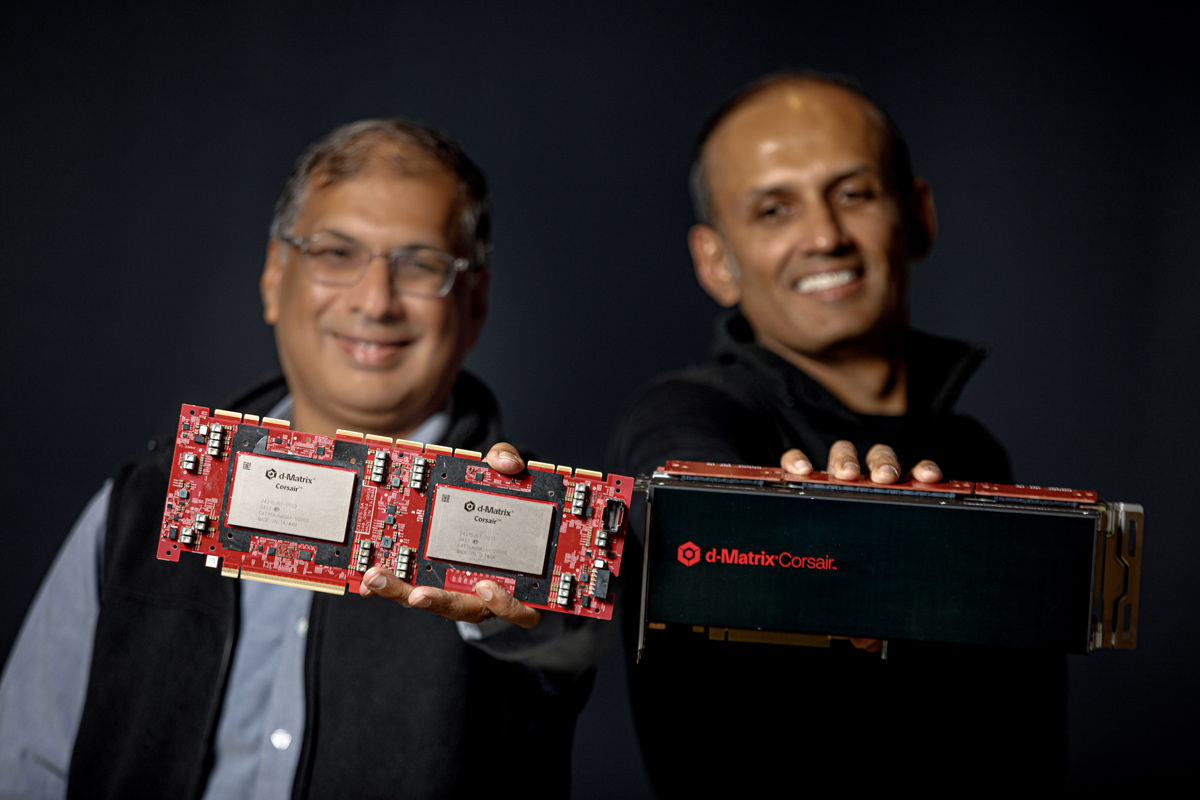

Who is d-Matrix?

d-Matrix

We’re industry veterans, who’ve shipped over 100 million chips. Years before generative AI started captivating imaginations, we were already at work— quietly making bold moves to take AI farther than anyone else.

Inspired by the visionaries, the ones who think different, who are dissatisfied with the status quo, who dare to dream a different future and then go ahead and build it.

Sure, we seemed like round pegs in a square hole, but that’s because nobody was able to see what we did.

Inspired by the visionaries, the ones who think different, who are dissatisfied with the status quo, who dare to dream a different future and then go ahead and build it.

Sure, we seemed like round pegs in a square hole, but that’s because nobody was able to see what we did.

Latest updates:

How accelerating feed-forward networks in disaggregated inference pipelines power next-generation AI